The Visual System

Learning Objectives

By the end of this section, you will be able to:

- Describe the basic anatomy of the visual system

- Discuss how rods and cones contribute to different aspects of vision

- Describe how monocular and binocular cues are used in the perception of depth

The visual system constructs a mental representation of the world around us (Figure 5.11). This contributes to our ability to successfully navigate through physical space and interact with important individuals and objects in our environments. This section will provide an overview of the basic anatomy and function of the visual system. In addition, we will explore our ability to perceive color and depth.

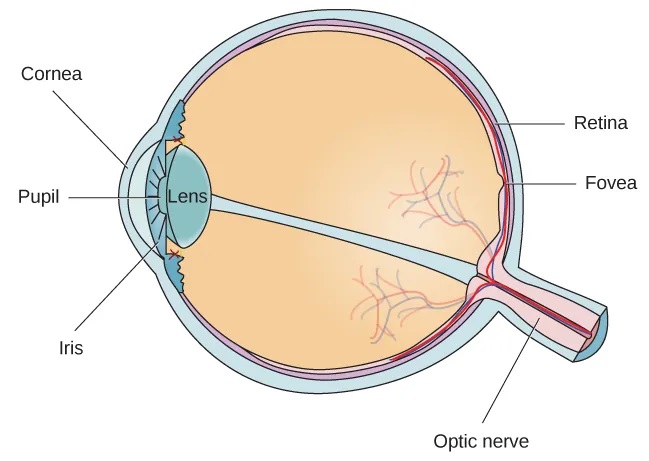

The eye is the major sensory organ involved in vision (Figure 5.12). Light waves are transmitted across the cornea and enter the eye through the pupil. The cornea is the transparent covering over the eye. It serves as a barrier between the inner eye and the outside world, and it is involved in focusing light waves that enter the eye. The pupil is the small opening in the eye through which light passes, and the size of the pupil can change as a function of light levels as well as emotional arousal. When light levels are low, the pupil will become dilated, or expanded, to allow more light to enter the eye. When light levels are high, the pupil will constrict, or become smaller, to reduce the amount of light that enters the eye. The pupil’s size is controlled by muscles that are connected to the iris, which is the colored portion of the eye.

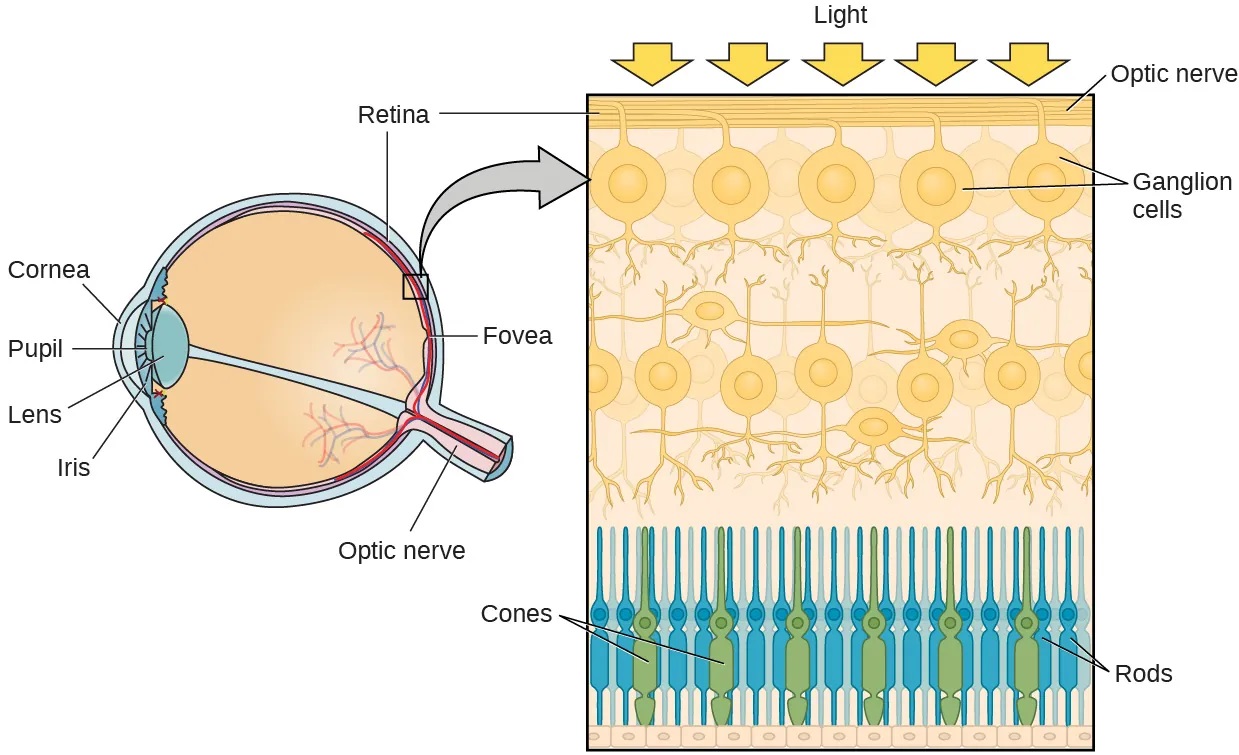

After passing through the pupil, light crosses the lens, a curved, transparent structure that serves to provide additional focus. The lens is attached to muscles that can change its shape to aid in focusing light that is reflected from near or far objects. In a normal-sighted individual, the lens will focus images perfectly on a small indentation in the back of the eye known as the fovea, which is part of the retina, the light-sensitive lining of the eye. The fovea contains densely packed specialized photoreceptor cells known as cones (Figure 5.13). Cones are light-detecting cells that work best in bright light conditions. They are very sensitive to acute detail and provide tremendous spatial resolution. They also are directly involved in our ability to perceive color.

While cones are concentrated in the fovea, where images tend to be focused, rods, another type of photoreceptor, are located throughout the remainder of the retina. Rods are specialized photoreceptors that work well in low light conditions, and while they lack the spatial resolution and color function of the cones, they are involved in our vision in dimly lit environments as well as in our perception of movement on the periphery of our visual field.

We have all experienced the different sensitivities of rods and cones when making the transition from a brightly lit environment to a dimly lit environment. Imagine going to see a blockbuster movie on a clear summer day. As you walk from the brightly lit lobby into the dark theater, you notice that you immediately have difficulty seeing much of anything. After a few minutes, you begin to adjust to the darkness and can see the interior of the theater. In the bright environment, your vision was dominated primarily by cone activity. As you move to the dark environment, rod activity dominates, but there is a delay in transitioning between the phases. If your rods do not transform light into nerve impulses as easily and efficiently as they should, you will have difficulty seeing in dim light, a condition known as night blindness.

Rods and cones are connected (via several interneurons) to retinal ganglion cells. Axons from the retinal ganglion cells converge and exit through the back of the eye to form the optic nerve. The optic nerve carries visual information from the retina to the brain. Because there are no photoreceptors at the point where the optic nerve leaves the eye, there is a corresponding point in the visual field called the blind spot: even when light from a small object is focused on the blind spot, we do not see it. We are not consciously aware of our blind spots for two reasons. First, each eye gets a slightly different view of the visual field; therefore, the blind spots do not overlap. Second, our visual system fills in the blind spot so that although we cannot respond to visual information that occurs in that portion of the visual field, we are also not aware that information is missing.

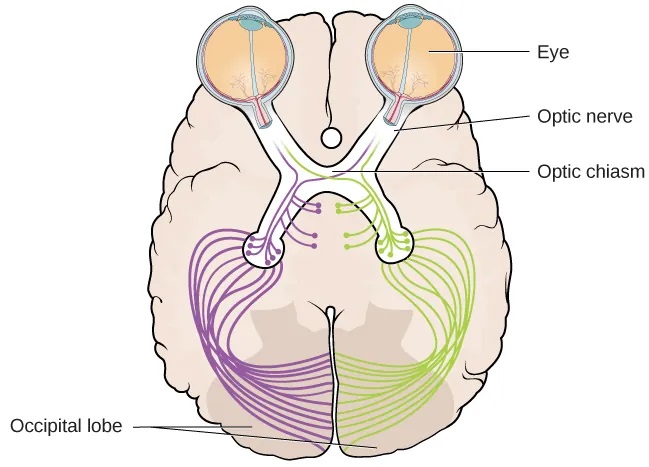

The optic nerves from the two eyes merge just below the brain at a point called the optic chiasm. As Figure 5.14 shows, the optic chiasm is an X-shaped structure that sits just below the cerebral cortex at the front of the brain. At the point of the optic chiasm, information from the right visual field (which comes from both eyes) is sent to the left side of the brain, and information from the left visual field is sent to the right side of the brain.
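The crossing rule described above can be written as a small lookup. This is only an illustrative sketch: the function name `receiving_hemisphere` and the string labels are hypothetical, and the code captures nothing beyond the rule stated in the text, namely that each half of the visual field is routed to the opposite side of the brain regardless of which eye picked it up.

```python
def receiving_hemisphere(visual_field_side):
    """Return the side of the brain that receives one half of the visual field."""
    # Hypothetical lookup: each half of the visual field goes to the opposite side.
    crossing = {"left": "right", "right": "left"}
    return crossing[visual_field_side]


# Both eyes see both halves of the visual field, so the eye does not change the result.
for eye in ("left eye", "right eye"):
    for field in ("left", "right"):
        side = receiving_hemisphere(field)
        print(f"{eye}, {field} visual field -> {side} side of the brain")
```

Note that the eye is deliberately not an input to the function: the destination depends only on where in the visual field the information originated.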

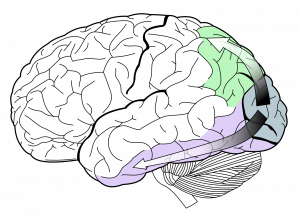

Once inside the brain, visual information is sent via a number of structures to the occipital lobe at the back of the brain for processing. Visual information might be processed in parallel pathways, which can generally be described as the “what pathway” (ventral) and the “where/how pathway” (dorsal) (Figure 5.15). The “what pathway” is involved in object recognition and identification, while the “where/how pathway” is involved with location in space and how one might interact with a particular visual stimulus (Milner & Goodale, 2008; Ungerleider & Haxby, 1994). For example, when you see a ball rolling down the street, the “what pathway” identifies what the object is, and the “where/how pathway” identifies its location or movement in space.

WHAT DO YOU THINK? The Ethics of Research Using Animals

David Hubel and Torsten Wiesel were awarded the Nobel Prize in Physiology or Medicine in 1981 for their research on the visual system. They collaborated for more than twenty years and made significant discoveries about the neurology of visual perception (Hubel & Wiesel, 1959, 1962, 1963, 1970; Wiesel & Hubel, 1963). They studied animals, mostly cats and monkeys. Although they used several techniques, they relied heavily on single-unit recordings, during which tiny electrodes were inserted in the animal’s brain to determine when a single cell was activated. Among their many discoveries, they found that specific brain cells respond to lines with specific orientations (called orientation selectivity), and they mapped the way those cells are arranged in areas of the visual cortex known as columns and hypercolumns.

In some of their research, they sutured one eye of newborn kittens closed and followed the development of the kittens’ vision. They discovered there was a critical period of development for vision. If kittens were deprived of input from one eye, other areas of their visual cortex filled in the area that was normally used by the eye that was sewn closed. In other words, neural connections that exist at birth can be lost if they are deprived of sensory input.

What do you think about sewing a kitten’s eye closed for research? To many animal advocates, this would seem brutal, abusive, and unethical. What if you could do research that would help ensure babies and children born with certain conditions could develop normal vision instead of becoming blind? Would you want that research done? Would you conduct that research, even if it meant causing some harm to cats? Would you think the same way if you were the parent of such a child? What if you worked at the animal shelter?

Like virtually every other industrialized nation, Canada permits medical experimentation on animals, with few limitations (assuming sufficient scientific justification). The goal of any laws that exist is not to ban such tests but rather to limit unnecessary animal suffering by establishing standards for the humane treatment and housing of animals in laboratories.

As explained by Stephen Latham, the director of the Interdisciplinary Center for Bioethics at Yale (2012), possible legal and regulatory approaches to animal testing vary on a continuum from strong government regulation and monitoring of all experimentation at one end, to a self-regulated approach that depends on the ethics of the researchers at the other end. The United Kingdom has the most significant regulatory scheme, whereas Japan uses the self-regulation approach. The U.S. and Canadian approach is somewhere in the middle, the result of a gradual blending of the two approaches.

There is no question that medical research is a valuable and important practice. The question is whether the use of animals is a necessary or even best practice for producing the most reliable results. Alternatives include the use of patient-drug databases, virtual drug trials, computer models and simulations, and noninvasive imaging techniques such as magnetic resonance imaging and computed tomography scans (“Animals in Science/Alternatives,” n.d.). Other techniques, such as microdosing, use humans not as test animals but as a means to improve the accuracy and reliability of test results. In vitro methods based on human cell and tissue cultures, stem cells, and genetic testing methods are also increasingly available.

In Canada, the CCAC (Canadian Council on Animal Care) oversees the care and use of animals in research. In order to receive federal funding, an institution must comply with, and be approved by, the CCAC to conduct research using animals. You can find out more about the CCAC on their website.

You can also read about animal research, and how the CCAC is involved, on the Queen’s University website.

Color and Depth Perception

We do not see the world in black and white; neither do we see it as two-dimensional (2-D) or flat (just height and width, no depth). Let’s look at how color vision works and how we perceive three dimensions (height, width, and depth).

Color Vision

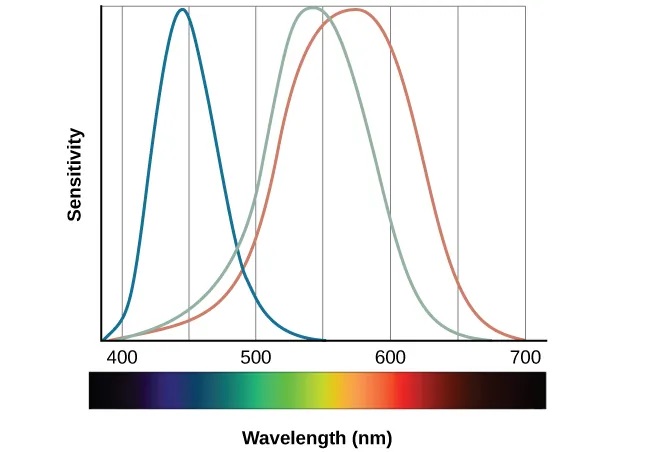

Normal-sighted individuals have three different types of cones that mediate color vision. Each of these cone types is maximally sensitive to a slightly different wavelength of light. According to the trichromatic theory of color vision, shown in Figure 5.16, all colors in the spectrum can be produced by combining red, green, and blue. The three types of cones are each receptive to one of the colors.
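The idea that a wide range of colors can be produced by combining red, green, and blue can be sketched as additive mixing of light. The code below is an illustration only, not a model of the eye: the function `mix_light`, the 0 to 1 intensity scale, and the clipping at full intensity are all assumptions made for the example.

```python
def mix_light(*colors):
    """Additively mix lights given as (red, green, blue) intensities from 0 to 1."""
    mixed = [0.0, 0.0, 0.0]
    for color in colors:
        for channel, intensity in enumerate(color):
            # Adding lights sums their intensities; clip at full intensity (1.0).
            mixed[channel] = min(1.0, mixed[channel] + intensity)
    return tuple(mixed)


# Hypothetical pure primaries on the assumed 0-to-1 scale.
RED = (1.0, 0.0, 0.0)
GREEN = (0.0, 1.0, 0.0)
BLUE = (0.0, 0.0, 1.0)

yellow = mix_light(RED, GREEN)        # equal red and green, no blue
white = mix_light(RED, GREEN, BLUE)   # all three primaries at full intensity
print(yellow, white)
```

In this toy scheme a color is fully described by three numbers, which parallels the claim that three cone types are enough to mediate color vision.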

CONNECT THE CONCEPTS

Colorblindness: A Personal Story

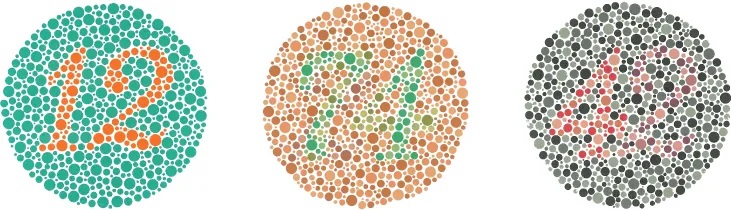

Several years ago, I dressed to go to a public function and walked into the kitchen where my 7-year-old daughter sat. She looked up at me, and in her most stern voice, said, “You can’t wear that.” I asked, “Why not?” and she informed me the colors of my clothes did not match. She had complained frequently that I was bad at matching my shirts, pants, and ties, but this time, she sounded especially alarmed. As a single father with no one else to ask at home, I drove us to the nearest convenience store and asked the store clerk if my clothes matched. She said my pants were a bright green color, my shirt was a reddish-orange, and my tie was brown. She looked at me quizzically and said, “No way do your clothes match.” Over the next few days, I started asking my coworkers and friends if my clothes matched. After several days of being told that my coworkers just thought I had “a really unique style,” I made an appointment with an eye doctor and was tested (Figure 5.17). It was then that I found out that I was colorblind. I cannot differentiate between most greens, browns, and reds. Fortunately, other than unknowingly being badly dressed, my colorblindness rarely harms my day-to-day life.

Some forms of color deficiency are rare. Seeing in grayscale (only shades of black and white) is extremely rare, and people who do so have only rods, which means they have very low visual acuity and cannot see very well. The most common X-linked inherited abnormality is red-green color blindness (Birch, 2012). Approximately 8% of males of European Caucasian descent, 5% of Asian males, 4% of African males, and less than 2% of indigenous American males, Australian males, and Polynesian males have red-green color deficiency (Birch, 2012). Comparatively, only about 0.4% of females of European Caucasian descent have red-green color deficiency (Birch, 2012).

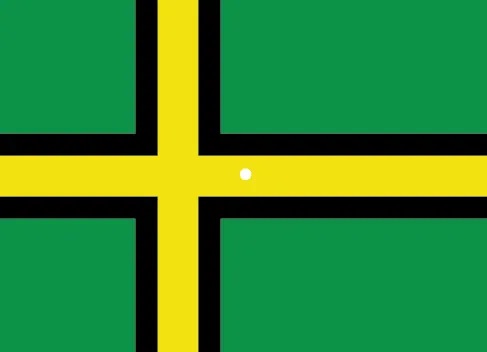

The trichromatic theory of color vision is not the only theory—another major theory of color vision is known as the opponent-process theory. According to this theory, color is coded in opponent pairs: black-white, yellow-blue, and green-red. The basic idea is that some cells of the visual system are excited by one of the opponent colors and inhibited by the other. So, a cell that was excited by wavelengths associated with green would be inhibited by wavelengths associated with red, and vice versa. One of the implications of opponent processing is that we do not experience greenish-reds or yellowish-blues as colors. Another implication is that this leads to the experience of negative afterimages. An afterimage describes the continuation of a visual sensation after removal of the stimulus. For example, when you stare briefly at the sun and then look away from it, you may still perceive a spot of light although the stimulus (the sun) has been removed. When color is involved in the stimulus, the color pairings identified in the opponent-process theory lead to a negative afterimage. You can test this concept using the flag in Figure 5.18.

These two theories, the trichromatic theory of color vision and the opponent-process theory, are not mutually exclusive. Research has shown that they apply to different levels of the nervous system. For visual processing on the retina, trichromatic theory applies: the cones are responsive to three different wavelengths that represent red, blue, and green. But once the signal moves past the retina on its way to the brain, the cells respond in a way consistent with opponent-process theory (Land, 1959; Kaiser, 1997).
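One way to picture how the two theories fit together is to take three cone responses as input and recode them into opponent signals. The sketch below is a deliberately simplified illustration: the function name `opponent_channels`, the 0 to 1 response scale, and the particular weights are assumptions chosen for clarity, not measured properties of real neurons.

```python
def opponent_channels(red_cone, green_cone, blue_cone):
    """Recode three cone responses (0 to 1) into three opponent signals.

    The weights are illustrative assumptions, not physiological values.
    """
    # Positive means reddish, negative means greenish.
    red_green = red_cone - green_cone
    # "Yellow" is treated here as the average of the red and green cone responses.
    yellow = (red_cone + green_cone) / 2
    # Positive means bluish, negative means yellowish.
    blue_yellow = blue_cone - yellow
    # Overall brightness for the black-white pair, from 0 (dark) to 1 (light).
    black_white = (red_cone + green_cone + blue_cone) / 3
    return {
        "red_green": red_green,
        "blue_yellow": blue_yellow,
        "black_white": black_white,
    }


# Hypothetical stimulus that drives the red-sensitive cones most strongly.
signals = opponent_channels(red_cone=0.9, green_cone=0.2, blue_cone=0.1)
print(signals)
```

Because each chromatic channel is a single signed number, it can report red or green but never both at once, which mirrors the observation that we do not experience greenish-reds or yellowish-blues.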

Depth Perception

Our ability to perceive spatial relationships in three-dimensional (3-D) space is known as depth perception. With depth perception, we can describe things as being in front, behind, above, below, or to the side of other things.

Our world is three-dimensional, so it makes sense that our mental representation of the world has three-dimensional properties. We use a variety of cues in a visual scene to establish our sense of depth. Some of these are binocular cues, which means that they rely on the use of both eyes. One example of a binocular depth cue is binocular disparity, the slightly different view of the world that each of our eyes receives. To experience this slightly different view, do this simple exercise: extend your arm fully and extend one of your fingers and focus on that finger. Now, close your left eye without moving your head, then open your left eye and close your right eye without moving your head. You will notice that your finger seems to shift as you alternate between the two eyes because of the slightly different view each eye has of your finger.

A 3-D movie works on the same principle: the special glasses you wear allow the two slightly different images projected onto the screen to be seen separately by your left and your right eye. As your brain processes these images, you have the illusion that the leaping animal or running person is coming right toward you.
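The finger exercise can be put into rough numbers with a little geometry. The sketch below assumes the finger is straight ahead and is viewed against a distant background; the function name `parallax_degrees`, the 6.3 cm eye separation, and the two viewing distances are illustrative assumptions rather than values from the text.

```python
import math


def parallax_degrees(eye_separation_cm, object_distance_cm):
    """Angle between the two eyes' lines of sight to an object straight ahead."""
    # Each eye sits half the eye separation to one side of the midline.
    half_angle = math.atan((eye_separation_cm / 2) / object_distance_cm)
    return math.degrees(2 * half_angle)


EYE_SEPARATION_CM = 6.3  # assumed typical adult value, for illustration only

at_arms_length = parallax_degrees(EYE_SEPARATION_CM, 60)  # finger held far away
close_to_face = parallax_degrees(EYE_SEPARATION_CM, 30)   # finger held nearer

print(f"arm's length: {at_arms_length:.1f} degrees")
print(f"half that distance: {close_to_face:.1f} degrees")
```

The angle grows as the object moves closer, so the difference between the two eyes' views is largest for nearby objects. Repeating the exercise with your finger close to your face should make the apparent shift noticeably bigger.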

Although we rely on binocular cues to experience depth in our 3-D world, we can also perceive depth in 2-D arrays. Think about all the paintings and photographs you have seen. Generally, you pick up on depth in these images even though the visual stimulus is 2-D. When we do this, we are relying on a number of monocular cues, or cues that require only one eye. If you think you can’t see depth with one eye, note that you don’t bump into things when using only one eye while walking—and, in fact, we have more monocular cues than binocular cues.

An example of a monocular cue would be what is known as linear perspective. Linear perspective refers to the fact that we perceive depth when we see two parallel lines that seem to converge in an image (Figure 5.19). Some other monocular depth cues are interposition, the partial overlap of objects, and the relative size and closeness of images to the horizon. Can you think of some additional pictorial depth cues that artists use to make you see depth in a 2-D painting or photograph?
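The convergence of parallel lines in an image follows from simple projection geometry. The sketch below uses a basic pinhole-camera model as a stand-in for the eye or a camera; the function names, the focal length of 1.0, and the 1.5 m rail spacing are assumptions made for illustration.

```python
def project(lateral_offset, depth, focal_length=1.0):
    """Pinhole projection: image position of a point at a given offset and depth."""
    return focal_length * lateral_offset / depth


def rail_gap_in_image(rail_spacing, depth, focal_length=1.0):
    """Separation in the image between two parallel rails at a given depth."""
    left = project(-rail_spacing / 2, depth, focal_length)
    right = project(rail_spacing / 2, depth, focal_length)
    return right - left


# Hypothetical rails 1.5 m apart, sampled at increasing distances from the viewer.
for depth_m in (2, 4, 8, 16):
    gap = rail_gap_in_image(rail_spacing=1.5, depth=depth_m)
    print(f"depth {depth_m:>2} m -> gap in image {gap:.4f}")
```

The real spacing between the rails never changes, but the gap in the image halves each time the distance doubles, so the lines appear to meet at the horizon. The visual system reads that shrinking gap as increasing distance.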

DIG DEEPER: Stereoblindness

Bruce Bridgeman was born with an extreme case of lazy eye that resulted in him being stereoblind, or unable to respond to binocular cues of depth. He relied heavily on monocular depth cues, but he never had a true appreciation of the 3-D nature of the world around him. This all changed one night in 2012 while Bruce was seeing a movie with his wife.

The movie the couple was going to see was shot in 3-D, and even though he thought it was a waste of money, Bruce paid for the 3-D glasses when he purchased his ticket. As soon as the film began, Bruce put on the glasses and experienced something completely new. For the first time in his life he appreciated the true depth of the world around him. Remarkably, his ability to perceive depth persisted outside of the movie theater.

There are cells in the nervous system that respond to binocular depth cues. Normally, these cells require activation during early development in order to persist, so experts familiar with Bruce’s case (and others like his) assume that at some point in his development, Bruce must have experienced at least a fleeting moment of binocular vision. It was enough to ensure the survival of the cells in the visual system tuned to binocular cues. The mystery now is why it took Bruce nearly 70 years to have these cells activated (Peck, 2012).

cornea: the transparent covering over the eye

pupil: the small opening in the eye through which light passes; its size can change as a function of light levels as well as emotional arousal

iris: the colored portion of the eye

lens: a curved, transparent structure that serves to provide additional focus; it is attached to muscles that can change its shape to aid in focusing light that is reflected from near or far objects

fovea: the part of the retina where images are focused; contains cones

cones: light-detecting cells; specialized photoreceptors that work best in bright light conditions, are very sensitive to acute detail, provide tremendous spatial resolution, and are directly involved in our ability to perceive color

rods: specialized photoreceptors that work well in low light conditions; they lack the spatial resolution and color function of the cones but are involved in our vision in dimly lit environments as well as in our perception of movement on the periphery of our visual field

optic nerve: carries visual information from the retina to the brain

blind spot: the part of our visual field where the optic nerve leaves the eye, meaning we do not receive visual information for that area

optic chiasm: X-shaped structure just below the cerebral cortex where the optic nerves from the two eyes merge; information from the right visual field (which comes from both eyes) is sent to the left side of the brain, and information from the left visual field is sent to the right side of the brain

occipital lobe: part of the cerebral cortex associated with visual processing; contains the primary visual cortex

“what pathway”: involved in object recognition and identification

“where/how pathway”: involved with location in space and how one might interact with a particular visual stimulus

trichromatic theory of color vision: suggests that all colors in the spectrum can be produced by combining red, green, and blue; the three types of cones are each receptive to one of the colors

opponent-process theory: suggests that color is coded in opponent pairs: black-white, yellow-blue, and green-red

afterimage: the continuation of a visual sensation after removal of the stimulus

binocular cues: depth cues that rely on the use of both eyes

binocular disparity: the slightly different view of the world that each of our eyes receives

monocular cues: depth cues that require only one eye, such as those in 2-D paintings or photographs

linear perspective: the fact that we perceive depth when we see two parallel lines that seem to converge in an image